
Lab cluster

Recovered from the older tannerjc.net wiki snapshot dated January 23, 2016.

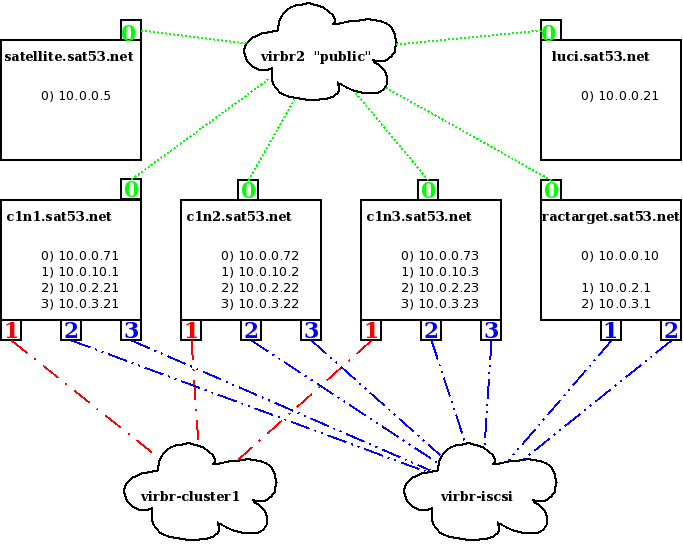

Infrastructure

Documentation

http://www.redhat.com/docs/en-US/Red_Hat_Enterprise_Linux/5.4/html/Cluster_Administration/index.html

create DNS records

[root@satellite ~]# fgrep c1 /var/named/chroot/var/named/data/sat53.net.hosts

c1n1.sat53.net. IN A 10.0.0.71

c1n2.sat53.net. IN A 10.0.0.72

c1n3.sat53.net. IN A 10.0.0.73

[root@satellite ~]# arp -a | sort -n

? (10.0.0.110) at 00:16:3E:46:FB:B9 [ether] on eth1 --node1

? (10.0.0.111) at 00:16:3E:13:0F:B7 [ether] on eth1 --node2

? (10.0.0.112) at 00:16:3E:10:B6:2F [ether] on eth1 --node3

sasha.dj.edm (192.168.1.14) at 82:96:E4:3F:0A:E8 [ether] on eth0

trainwreck.dj.edm (192.168.1.1) at 00:0E:0C:9F:FC:A1 [ether] on eth0

[root@satellite ~]#

Create heartbeat network [virbr-cluster1]

http://kbase.redhat.com/faq/docs/DOC-9766

[root@sasha ~]# cat virbr-cluster1.xml

<network>

<name>virbr-cluster1</name>

<uuid></uuid>

<bridge forwardDelay="0" stp="on" name="virbr11" />

<ip netmask="255.255.255.0" address="10.0.10.1">

</ip>

</network>

[root@sasha ~]# virsh

Welcome to virsh, the virtualization interactive terminal.

Type: 'help' for help with commands

'quit' to quit

virsh # net-define /root/virbr-cluster1.xml

Network virbr-cluster1 defined from /root/virbr-cluster1.xml

virsh # net-start virbr-cluster1

Network virbr-cluster1 started

virsh # net-list

Name State Autostart

-----------------------------------------

default active yes

virbr-cluster1 active no

virbr-iscsi active yes

virbr-rac active yes

virbr-sat4 active yes

virbr-sat5 active yes

virsh # net-autostart virbr-cluster1

Network virbr-cluster1 marked as autostarted

virsh # quit

[root@sasha ~]# ifconfig -a | fgrep virbr

virbr0 Link encap:Ethernet HWaddr 00:00:00:00:00:00

virbr1 Link encap:Ethernet HWaddr 00:00:00:00:00:00

virbr2 Link encap:Ethernet HWaddr 1E:07:A8:F1:FB:37

virbr4 Link encap:Ethernet HWaddr 00:00:00:00:00:00

virbr5 Link encap:Ethernet HWaddr 4E:66:64:74:9C:36

virbr11 Link encap:Ethernet HWaddr 3E:B3:FB:3A:F4:76
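
The interactive virsh session above can probably also be run non-interactively, which is easier to repeat on another host. The following is only a sketch: the `run` helper and the `DRY_RUN` variable are hypothetical conveniences that are not part of the original notes, and by default the script only prints the commands it would run.

```shell
#!/bin/bash
# Non-interactive version of the virsh session above (sketch).
# DRY_RUN is a made-up switch: 1 (default) prints the commands, 0 executes them.
NET=virbr-cluster1
XML=/root/virbr-cluster1.xml

run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

define_heartbeat_net() {
    # Same three steps as the session: define, start, mark for autostart.
    run virsh net-define "$XML" &&
    run virsh net-start "$NET" &&
    run virsh net-autostart "$NET"
}

define_heartbeat_net
```

Running it with `DRY_RUN=0` on the hypervisor would execute the three virsh commands in order and stop at the first failure.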

Shared Storage

- Execute commands on ractarget …

#create target

tgtadm --lld iscsi --op new --mode target --tid 4 -T iqn.2010-28.com.example:cluster1disk1

#add disk to target

tgtadm --lld iscsi --op new --mode logicalunit --tid 4 --lun 1 -b /dev/sdd

#node1

tgtadm --lld iscsi --op bind --mode target --tid 4 -I 10.0.2.21

tgtadm --lld iscsi --op bind --mode target --tid 4 -I 10.0.3.21

#node2

tgtadm --lld iscsi --op bind --mode target --tid 4 -I 10.0.2.22

tgtadm --lld iscsi --op bind --mode target --tid 4 -I 10.0.3.22

#node3

tgtadm --lld iscsi --op bind --mode target --tid 4 -I 10.0.2.23

tgtadm --lld iscsi --op bind --mode target --tid 4 -I 10.0.3.23
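
The six bind commands above follow a regular pattern (two iSCSI subnets, three node addresses), so they could be generated rather than typed. This is a minimal sketch that only prints the commands; the function name `gen_bind_cmds` is made up for illustration, and the target id, subnets and host octets are copied from the commands above.

```shell
#!/bin/bash
# Print the tgtadm bind commands for target 4 (sketch, prints only).
TID=4

gen_bind_cmds() {
    local host subnet
    for host in 21 22 23; do            # node1, node2, node3
        for subnet in 10.0.2 10.0.3; do # iSCSI path 1 and path 2
            echo "tgtadm --lld iscsi --op bind --mode target --tid ${TID} -I ${subnet}.${host}"
        done
    done
}

gen_bind_cmds
```

The printed lines can be reviewed and then pasted into a shell or into /etc/rc.local on ractarget.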

- Add commands to /etc/rc.local

- Check exports

[root@ractarget ~]# tgtadm --lld iscsi --op show --mode target | fgrep Target

Target 1: iqn.2010-28.com.example:ocr

Target 2: iqn.2010-28.com.example:voting

Target 3: iqn.2010-28.com.example:asm

Target 4: iqn.2010-28.com.example:cluster1disk1

Create management node (luci)

build guest with koan

koan --server=192.168.1.61 --virt --profile=rhel5clusternode:1:RedHatdeArgentinaSA --virt-bridge=virbr2 --virt-name=cluster1-luci

- Fix networking

[root@localhost ~]# cat /etc/sysconfig/network

NETWORKING=yes

NETWORKING_IPV6=yes

HOSTNAME=luci.sat53.net

GATEWAY=10.0.0.5

- yum -y install luci; chkconfig luci on

[root@luci ~]# luci_admin init

Initializing the luci server

Creating the 'admin' user

Enter password:

Confirm password:

Please wait...

The admin password has been successfully set.

Generating SSL certificates...

The luci server has been successfully initialized

You must restart the luci server for changes to take effect.

Run service luci restart to do so

[root@luci ~]#

[root@luci ~]# service luci restart

Shutting down luci: [ OK ]

Starting luci: Generating https SSL certificates... done

[ OK ]

Point your web browser to https://luci.sat53.net:8084 to access luci

[root@luci ~]#

Create cluster nodes

Kickstart file

# Kickstart config file generated by RHN Satellite Config Management

# Profile Label : rhel5clusternode

# Date Created : 2010-05-11 13:43:57.0

install

text

network --bootproto dhcp

url --url http://satellite.sat53.net/ks/dist/ks-rhel-x86_64-server-5-u4

lang en_US

keyboard us

zerombr

clearpart --all

bootloader --location mbr

timezone America/New_York

auth --enablemd5 --enableshadow

rootpw --iscrypted $1$JnqtgwiW$n49M1ljMD/X1yexKunf1e0

selinux --disabled

reboot

firewall --disabled

skipx

repo --name=VT --baseurl=http://satellite.sat53.net/ks/dist/ks-rhel-x86_64-server-5-u4/VT

key --skip

autopart

%packages

@ Base

build guests via koan

koan --server=192.168.1.61 --virt --profile=rhel5clusternode:1:RedHatdeArgentinaSA --virt-bridge=virbr2 --virt-name=cluster1-node1

koan --server=192.168.1.61 --virt --profile=rhel5clusternode:1:RedHatdeArgentinaSA --virt-bridge=virbr2 --virt-name=cluster1-node2

koan --server=192.168.1.61 --virt --profile=rhel5clusternode:1:RedHatdeArgentinaSA --virt-bridge=virbr2 --virt-name=cluster1-node3
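
Since the three koan calls differ only in the guest name, a loop is one way to keep them consistent. This is a sketch that prints the commands instead of running them; `gen_koan_cmds` and the variable names are hypothetical, while the server, profile and bridge values are taken from the commands above.

```shell
#!/bin/bash
# Print one koan command per cluster node (sketch, prints only).
SERVER=192.168.1.61
PROFILE=rhel5clusternode:1:RedHatdeArgentinaSA
BRIDGE=virbr2

gen_koan_cmds() {
    local n
    for n in 1 2 3; do
        echo "koan --server=${SERVER} --virt --profile=${PROFILE} --virt-bridge=${BRIDGE} --virt-name=cluster1-node${n}"
    done
}

gen_koan_cmds
```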

Setup IP interfaces

- manually add 1 NIC with the virbr-cluster1 network

- manually add 2 NICs with the virbr-iscsi network

- Fix guests’ configuration files or deploy with a unique configuration channel for each machine

- /etc/resolv.conf

- /etc/sysconfig/network

- /etc/sysconfig/network-scripts/ifcfg-eth0 [public]

- /etc/sysconfig/network-scripts/ifcfg-eth1 [heartbeat]

- /etc/sysconfig/network-scripts/ifcfg-eth2 [iscsi path1]

- /etc/sysconfig/network-scripts/ifcfg-eth3 [iscsi path2]
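
For illustration, a static heartbeat interface file might look roughly like the following. The notes do not record the actual contents, so every value here is an assumption; in particular the address 10.0.10.71 is made up and only chosen to fall inside the 10.0.10.0/24 range of virbr-cluster1.

```shell
# /etc/sysconfig/network-scripts/ifcfg-eth1 [heartbeat]
# Illustrative values only; the IPADDR below is hypothetical.
DEVICE=eth1
BOOTPROTO=static
IPADDR=10.0.10.71
NETMASK=255.255.255.0
ONBOOT=yes
```

No gateway is set here on the assumption that heartbeat traffic stays on its own isolated bridge.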

Configure NTP

- date -s hh:mm:ss

- hwclock --systohc

- yum -y install ntp

- edit /etc/ntp.conf

[root@c1n1 ~]# cat /etc/ntp.conf | fgrep server | egrep -v ^\#

server satellite.sat53.net

server 127.127.1.0 # local clock

- edit /etc/sysconfig/ntpd

[root@c1n1 ~]# cat /etc/sysconfig/ntpd | egrep -v ^\$

# Drop root to id 'ntp:ntp' by default.

OPTIONS="-u ntp:ntp -p /var/run/ntpd.pid"

# Set to 'yes' to sync hw clock after successful ntpdate

SYNC_HWCLOCK=yes

# Additional options for ntpdate

NTPDATE_OPTIONS=-x

- chkconfig ntpd on; service ntpd start

Log in to iSCSI target

- subscribe to cluster-storage child channel

- send remote commands to all nodes

service network restart

yum -y install sg3_utils iscsi-initiator-utils; chkconfig iscsi on; service iscsi start

iscsiadm -m discovery -t sendtargets -p 10.0.2.1

iscsiadm -m discovery -t sendtargets -p 10.0.2.1 -l

iscsiadm -m discovery -t sendtargets -p 10.0.3.1

iscsiadm -m discovery -t sendtargets -p 10.0.3.1 -l

- Verify that there is an individual disk for each path

[root@c1n1 ~]# iscsiadm --mode node

10.0.3.1:3260,1 iqn.2010-28.com.example:cluster1disk1

10.0.2.1:3260,1 iqn.2010-28.com.example:cluster1disk1

[root@c1n1 ~]# sg_map -i -x

/dev/sg0 0 0 0 0 12 IET Controller 0001

/dev/sg1 0 0 0 1 0 /dev/sda IET VIRTUAL-DISK 0001

/dev/sg2 1 0 0 0 12 IET Controller 0001

/dev/sg3 1 0 0 1 0 /dev/sdb IET VIRTUAL-DISK 0001
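
The per-path check above can be scripted by counting how many portals list the target in the `iscsiadm --mode node` output. This is a sketch: `count_paths` is a hypothetical helper, and the sample text is the output shown above rather than a live query.

```shell
#!/bin/bash
# Count the portals (paths) that expose a given target IQN (sketch).
# Reads `iscsiadm --mode node` style output on stdin; $1 is the IQN.
count_paths() {
    awk -v iqn="$1" '$2 == iqn { n++ } END { print n + 0 }'
}

# Sample taken from the c1n1 output above.
sample='10.0.3.1:3260,1 iqn.2010-28.com.example:cluster1disk1
10.0.2.1:3260,1 iqn.2010-28.com.example:cluster1disk1'

printf '%s\n' "$sample" | count_paths iqn.2010-28.com.example:cluster1disk1
```

On a node, the same function could be fed from `iscsiadm --mode node` directly; a result other than 2 would suggest that one of the two paths is missing.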

- yum -y install device-mapper-multipath; chkconfig multipathd on

- cat /etc/multipath.conf | sed -e 's/devnode/#devnode/g' > /etc/multipath.conf.tmp; mv -f /etc/multipath.conf.tmp /etc/multipath.conf; multipath -v4

[root@c1n1 ~]# multipath -ll

mpath0 (1IET_00040001) dm-2 IET,VIRTUAL-DISK

[size=7.8G][features=0][hwhandler=0][rw]

\_ round-robin 0 [prio=1][active]

\_ 2:0:0:1 sda 8:0 [active][ready]

\_ round-robin 0 [prio=1][enabled]

\_ 3:0:0:1 sdb 8:16 [active][ready]
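
The sed step earlier in this section comments out every line that contains devnode. Assuming the stock multipath.conf still carries its default blacklist of all devices, that is what lets multipath claim sda and sdb. The sketch below applies the same substitution to a small sample instead of the real file; `comment_devnode` is a made-up name.

```shell
#!/bin/bash
# Show the effect of the devnode substitution on a sample blacklist (sketch).
comment_devnode() {
    sed -e 's/devnode/#devnode/g'
}

# Sample stanza assumed to resemble the default blacklist.
printf 'blacklist {\n        devnode "*"\n}\n' | comment_devnode
```

Note that the substitution is not idempotent: running it a second time on the same file would add another `#` in front of lines that are already commented.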

Install ricci

- yum -y install ricci; chkconfig ricci on; service ricci start

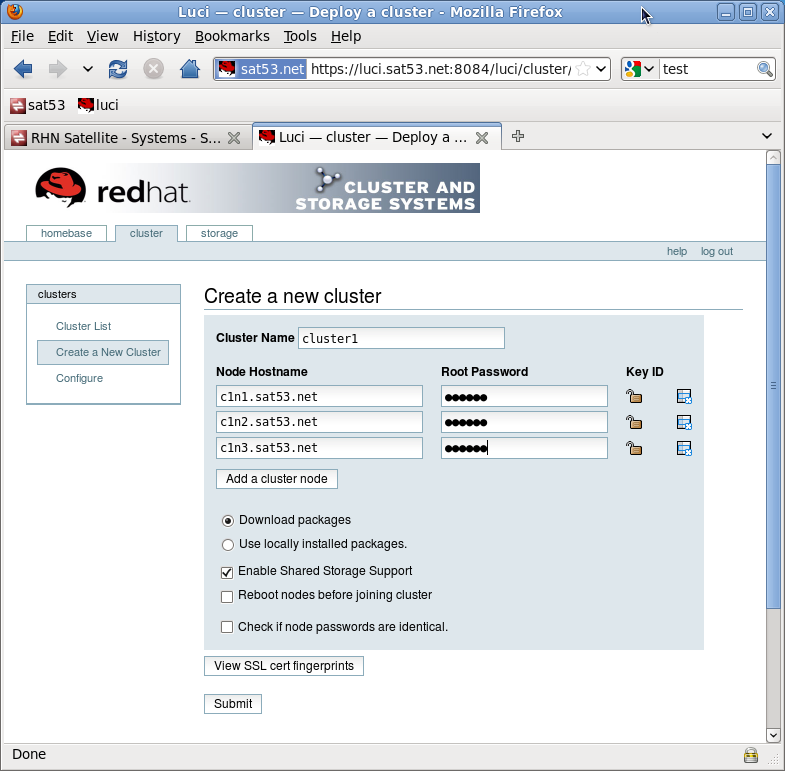

Create cluster with luci

Add nodes to a new cluster

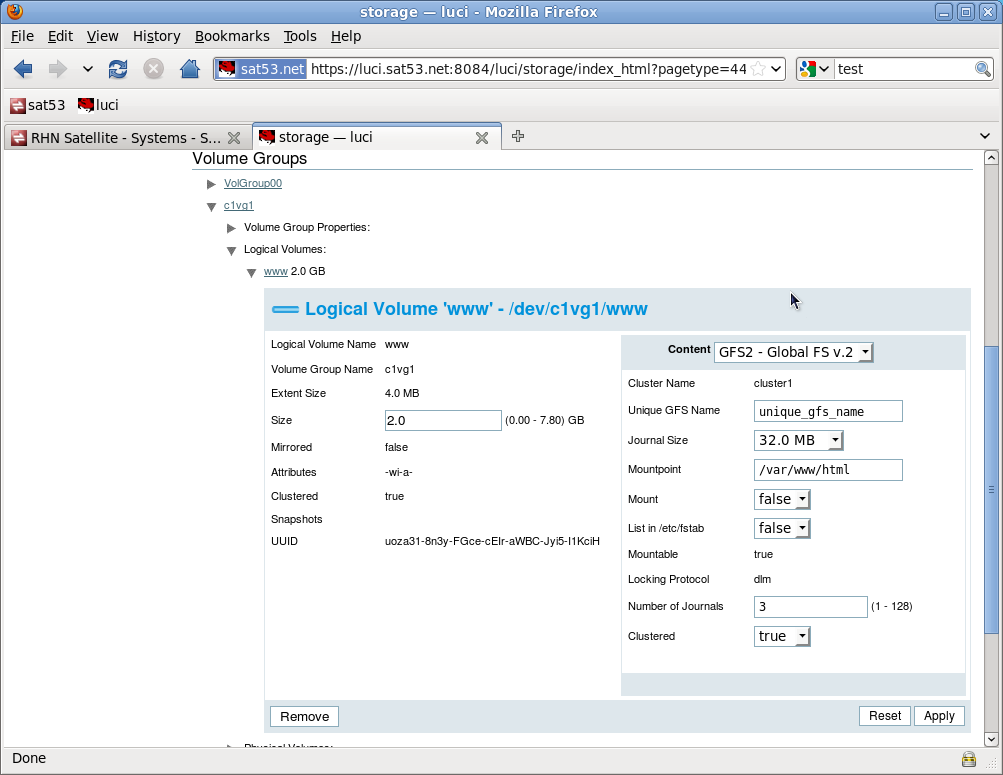

create clustered volume group

[root@c1n3 ~]# pvcreate /dev/mapper/mpath0

Physical volume /dev/mapper/mpath0 successfully created

[root@c1n3 ~]# vgcreate c1vg1 /dev/mapper/mpath0

Clustered volume group c1vg1 successfully created

[root@c1n3 ~]# lvcreate -n www -L 2G c1vg1

Logical volume www created

create gfs2 filesystem

- mount on all nodes via luci

- check mounts

[root@c1n1 ~]# mount | fgrep gfs2

/dev/mapper/c1vg1-www on /var/www/html type gfs2 (rw,hostdata=jid=0:id=262146:first=1)

[root@c1n2 ~]# mount | fgrep gfs2

/dev/mapper/c1vg1-www on /var/www/html type gfs2 (rw,hostdata=jid=1:id=262146:first=0)

[root@c1n3 ~]# mount | fgrep gfs2

/dev/mapper/c1vg1-www on /var/www/html type gfs2 (rw,hostdata=jid=2:id=262146:first=0)
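
The notes do not record the command used to create the filesystem. A plausible form, given three nodes (journal ids 0 to 2 in the mounts above) and DLM locking, is sketched below. The cluster name `cluster1` and the filesystem label `www` are assumptions; the lock table must match the cluster name actually configured in luci. The script only prints the command.

```shell
#!/bin/bash
# Print a candidate mkfs.gfs2 command for the www volume (sketch, prints only).
CLUSTER_NAME=cluster1            # hypothetical; use the name set in luci
FS_NAME=www                      # hypothetical label
JOURNALS=3                       # one journal per node
DEVICE=/dev/mapper/c1vg1-www     # device path as shown in the mount output

gen_mkfs_cmd() {
    echo "mkfs.gfs2 -p lock_dlm -t ${CLUSTER_NAME}:${FS_NAME} -j ${JOURNALS} ${DEVICE}"
}

gen_mkfs_cmd
```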

Add clustered httpd

- yum -y install httpd